Analytics in the Agentic Age: Surviving the "Synthetic Traffic" Crisis

Deep Kanabar

Head of Strategy

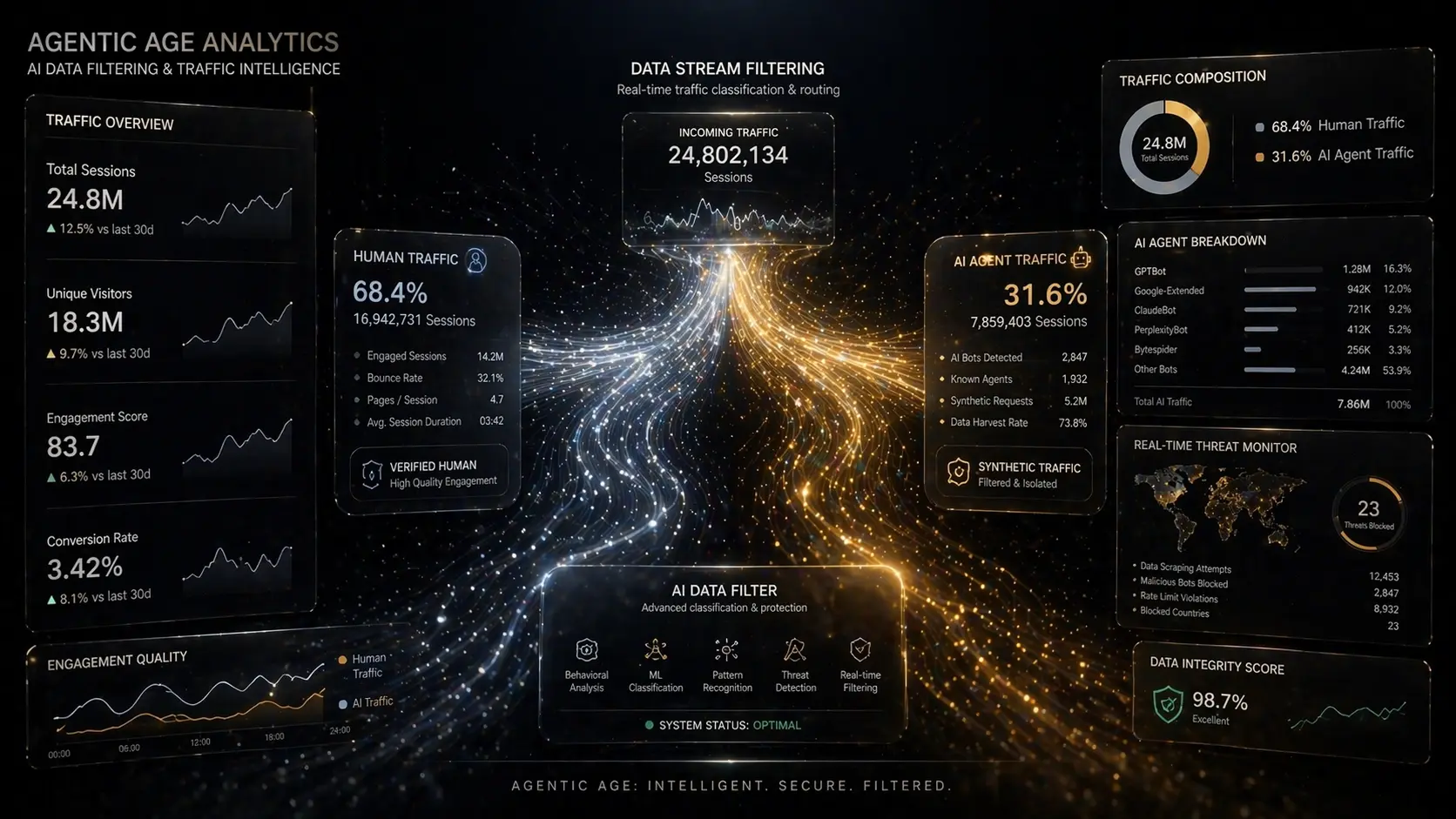

Right now, in boardrooms across the globe, Chief Marketing Officers are staring at their Google Analytics 4 (GA4) dashboards in a state of absolute panic.

The charts show a bizarre, contradictory narrative: "Direct Traffic" is experiencing unprecedented, massive spikes, but average session duration has plummeted to mere milliseconds. Conversion rates on traditional landing pages are in freefall. The immediate assumption is that the website is broken, or worse, that a malicious bot attack is underway.

But the website isn't broken. You are simply witnessing the birth of the Synthetic Web.

In 2026, we have fully entered the Agentic Age. Autonomous AI agents—acting on behalf of human users—are navigating the web, synthesizing answers, scraping pricing tables, and even booking appointments. This non-human activity is generating massive volumes of Synthetic Traffic.

If your agency is still using 2023 analytics frameworks to measure 2026 traffic, your data is compromised. In this comprehensive guide, the ThynkUnicorn data team dismantles the Synthetic Traffic crisis and provides the exact technical framework required to measure, mitigate, and monetize machine-driven web traffic.

Part 1: The Anatomy of "Dark AI Traffic"

To solve the analytics crisis, we must first understand what is actually hitting your servers. We categorize modern non-human traffic into three distinct tiers, collectively known as Dark AI Traffic.

Tier 1: The Answer Engines (The Good)

These are the crawlers dispatched by major LLMs (Large Language Models) to synthesize real-time answers for their users.

Tier 2: The Autonomous Agents (The Transactors)

These are highly specialized AI tools granted permission by users to execute tasks on the Universal Commerce Protocol (UCP).

Tier 3: The Parasitic Scrapers (The Ugly)

These are unlicensed, rogue LLM scrapers attempting to steal your proprietary content to train their own foundation models without offering you any citation or traffic in return.

Part 2: The Death of the "Bounce Rate"

For two decades, "Bounce Rate" and "Average Session Duration" were the holy grail metrics of user engagement. If a user stayed on your page for three minutes, the content was good. If they bounced in three seconds, the content was bad.

In the Agentic Age, this logic is entirely inverted. We must bifurcate our understanding of ROI into two distinct categories: Human ROI and Machine ROI.

The Paradox of Machine Dwell Time

An AI agent does not read like a human. It does not pause to admire your hero image or scroll thoughtfully through your testimonials. It ingests your `llms.txt` file or your `Article` schema in a fraction of a second.

If `GPTBot` visits your site, extracts your proprietary data, and leaves in 0.05 seconds, that is a highly successful interaction. The bot got exactly what it needed to cite you in a ChatGPT response.

If you are judging the quality of your content based on an aggregate session duration that mixes sluggish human reading speeds with lightning-fast machine ingestion, your data is fundamentally poisoned. You will end up "optimizing" pages that are already performing perfectly for AI.

Redefining the Metrics

To survive, growth teams must abandon blended metrics. You must filter your views.

Part 3: The Bifurcated Analytics Framework

How do you separate the humans from the machines? You cannot rely on client-side tracking (like the standard Google Tag Manager snippet). AI agents often do not execute JavaScript, meaning they are completely invisible to standard analytics, or worse, they spoof their environments.

You must move your tracking to the server level. Here is the ThynkUnicorn 3-Step Framework for Agentic Analytics.

Step 1: Mandatory Server-Side Tracking (SST)

Client-side tracking is dead. Browser privacy settings (ITP), ad blockers, and non-rendering AI bots have killed the pixel.

You must implement a Server-Side Google Tag Manager (sGTM) container. When a request hits your server, the server logs the raw request details (IP address, User-Agent, payload size) before any front-end code is even loaded. This guarantees 100% visibility into every entity—human or machine—that interacts with your domain.

Step 2: Edge-Level Bot Filtering (Cloudflare Workers)

To separate your data streams, we recommend deploying Edge Functions (using Cloudflare Workers or Vercel Edge Middleware).

When a request arrives at the edge of your network, the Worker inspects the `User-Agent` and the request behavior.

This immediately cleanses your primary GA4 dashboard of synthetic traffic, restoring the accuracy of your human engagement metrics.

Step 3: Log File Analysis (The Ultimate Truth)

Even with Edge filtering, some rogue scrapers will slip through by spoofing human browsers. The only infallible source of truth in 2026 is Log File Analysis.

Your DevOps and SEO teams must collaborate to run weekly analyses of your raw server access logs. Tools like Splunk or ELK stack (Elasticsearch, Logstash, Kibana) can visualize these logs. By analyzing server logs, you can see exactly which URLs are being hit hardest by Google's new LLM crawlers, providing a leading indicator of which pages are about to be featured in AI Overviews.

Part 4: Honeypotting—Managing the Parasites

Now that you can *see* the Synthetic Traffic, you must control it. You want to welcome the Answer Engines (Tier 1) and the Transactors (Tier 2), but you must ruthlessly block the Parasites (Tier 3) that are stealing your content to train competing models.

The "Defensive `robots.txt`" is Not Enough

Many brands tried to solve this in 2024 by simply adding `Disallow: /` for `CCBot` or `Bytespider` in their `robots.txt` file.

Rogue scrapers ignore `robots.txt`. It is a polite request, not a firewall.

Enter "Honeypotting" and WAF Rules

To protect your brand's Information Gain, you must implement Web Application Firewall (WAF) rules combined with Honeypotting.

1. Rate Limiting by ASN: Parasitic scrapers often originate from specific cloud hosting providers (AWS, DigitalOcean, Alibaba Cloud) rather than residential ISPs. Set aggressive rate limits on traffic coming from known data center ASNs. A human might read 3 pages a minute; a scraper reads 300. Block the spike.

2. The Hidden Link Honeypot: Place an invisible link in the DOM of your website (using `display: none`) that leads to a hidden, disallowed directory. Humans will never see or click this link. Rogue scrapers, which blindly crawl every `href` tag, will follow it. The moment an IP address hits that hidden directory, your server permanently blacklists the IP.

3. Allowlisting the Citations: Explicitly allowlist the IP ranges and verified User-Agents of the AI systems that actually drive business value (OpenAI, Google, Anthropic, Apple).

Part 5: The ThynkUnicorn 2026 Analytics Protocol

If your agency or internal team is not actively managing the Synthetic Web, you are operating blindly. Here is the checklist every enterprise marketing team must implement this quarter:

The Future is Bifurcated

The internet is no longer a human-only playground. By the end of this decade, the majority of web requests will be generated by autonomous software.

The brands that win won't be the ones that try to force AI agents to behave like humans. The winners will be the brands that build two perfectly optimized experiences: a beautiful, sticky interface for human psychology, and a lightning-fast, highly structured data layer for machine ingestion.

Is your data compromised by Synthetic Traffic? Contact the ThynkUnicorn data science team today for a comprehensive Server-Side Analytics Audit and Edge Firewall configuration.