LLM Reputation Management: The 2026 Guide to AI Hallucination Defense

Priyesh Dhaduk

Head of Technology

Online Reputation Management (ORM) used to be simple: if a negative article appeared, you built backlinks to positive articles to push the bad PR to page two of Google.

In 2026, the game has fundamentally changed. What happens when ChatGPT hallucinates that your SaaS company had a catastrophic data breach, or that a partner at your law firm was disbarred?

You cannot issue a takedown notice to a neural network. The false information isn't a URL you can get removed; it is baked into the model's weights. To defend your brand, you must pivot to LLM Reputation Management (AI-ORM).

The Threat: Hallucination as Fact

Large Language Models (LLMs) are predictive text engines. They don't "know" facts; they predict the most probable next token based on patterns in their training data. If your brand's digital footprint is thin, the model has little to anchor on and will fill the gaps with plausible, but potentially devastating, hallucinations.

Because Answer Engines (like Google Gemini and Perplexity) deliver their outputs in a confident, authoritative voice, many users never verify the claims. A single AI hallucination can derail enterprise deals and damage local reputations overnight.

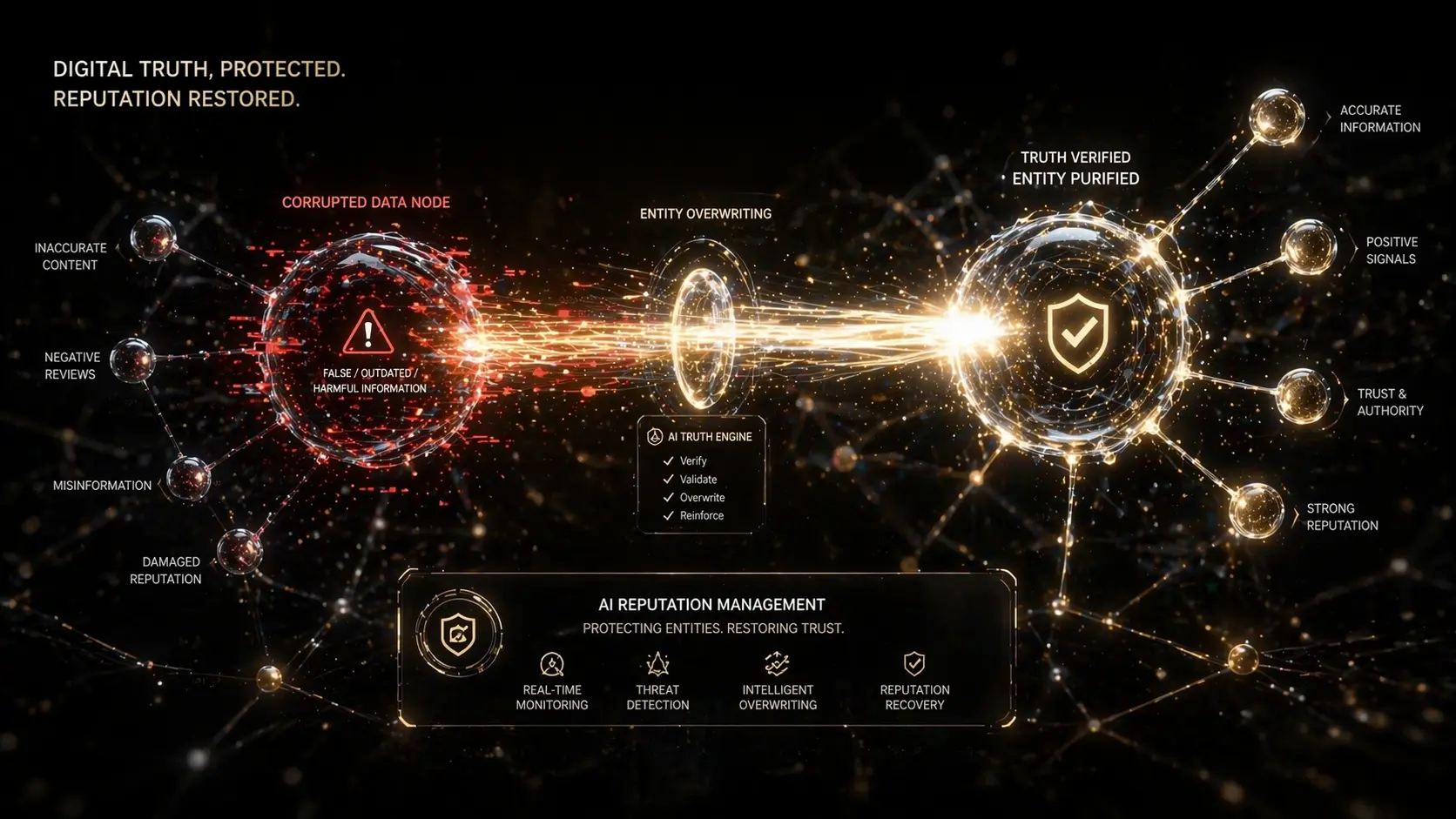

The ThynkUnicorn Strategy: "Entity Overwriting"

You cannot delete an AI's memory. Instead, you must aggressively overwrite it by flooding the Knowledge Graph with high-authority signals that contradict the hallucination. We call this Entity Overwriting.

1. The "Seed Site" Strategy

AI models do not treat all websites equally. Their training data and retrieval sources tend to lean heavily on highly trusted "Seed Sites" (e.g., Wikipedia, Bloomberg, Forbes, Crunchbase, and major PR wires).

If an LLM is hallucinating your pricing model, publishing a blog post on your own site isn't enough. You must plant the correct, factual entity definitions in these specific seed sites.

2. Defensive `llms.txt`

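We previously discussed using the `llms.txt` file to guide AI agents to your best content. In AI-ORM, the same file plays defense: it points agents at the pages that state your canonical facts (pricing, leadership, security record), so any agent that reads it has less reason to guess.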
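As a rough illustration, the sketch below generates a defensive `llms.txt` in Python. It assumes the layout described in the public llms.txt proposal (a title line, a short quoted summary, then a list of links with notes). The brand "ExampleCo", the example.com URLs, and the `FactPage` and `build_llms_txt` names are all hypothetical, not part of any library.

```python
from dataclasses import dataclass


@dataclass
class FactPage:
    """One page that states canonical facts about the brand (hypothetical type)."""
    title: str
    url: str
    note: str


def build_llms_txt(brand: str, summary: str, pages: list[FactPage]) -> str:
    """Render llms.txt content: title, quoted summary, then a list of fact pages."""
    lines = [f"# {brand}", "", f"> {summary}", "", "## Canonical facts", ""]
    for page in pages:
        # Each entry is a markdown link followed by a short note.
        lines.append(f"- [{page.title}]({page.url}): {page.note}")
    return "\n".join(lines) + "\n"


# Hypothetical brand and URLs, for illustration only.
pages = [
    FactPage("Pricing", "https://example.com/pricing.md", "Current plans and prices"),
    FactPage("Security", "https://example.com/security.md", "Security record and certifications"),
]
content = build_llms_txt("ExampleCo", "ExampleCo sells project management software.", pages)
print(content)
```

The output would be saved as `llms.txt` at the site root. The point of the design is that the facts most likely to be hallucinated each get a dedicated, linkable page.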

3. Automated Prompt Auditing

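You cannot fix a hallucination if you don't know it exists. Automated prompt auditing means regularly asking the major models the questions your customers ask and flagging any answer that contains a false claim about your brand.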
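A minimal sketch of a prompt audit in Python, under stated assumptions: `ask_model` is a placeholder for whatever client you use to query an LLM, `fake_model` is a stub standing in for it, and the brand, prompts, and red-flag phrases are invented for illustration. The audit only does case-insensitive phrase matching, which is a simplification.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Finding:
    """A model answer that contained a red-flag phrase (hypothetical type)."""
    prompt: str
    phrase: str
    answer: str


def audit_prompts(
    ask_model: Callable[[str], str],
    prompts: list[str],
    red_flags: list[str],
) -> list[Finding]:
    """Send each prompt to the model and record answers containing a red-flag phrase."""
    findings = []
    for prompt in prompts:
        answer = ask_model(prompt)
        lowered = answer.lower()
        for phrase in red_flags:
            # Case-insensitive substring match; a flagged answer still needs human review.
            if phrase.lower() in lowered:
                findings.append(Finding(prompt, phrase, answer))
    return findings


def fake_model(prompt: str) -> str:
    """Stub standing in for a real LLM client; replace with your own call."""
    if "breach" in prompt.lower():
        return "ExampleCo suffered a data breach in 2024."
    return "ExampleCo sells project management software."


prompts = ["Has ExampleCo had a data breach?", "What does ExampleCo do?"]
red_flags = ["data breach", "disbarred"]
for finding in audit_prompts(fake_model, prompts, red_flags):
    print(f"FLAGGED '{finding.phrase}' for prompt: {finding.prompt}")
```

Phrase matching will also flag a correct denial such as "ExampleCo has never had a data breach", so treat the findings as a review queue rather than a verdict. Running the audit on a schedule against each model you care about is what makes it "automated".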

Protect the Knowledge Graph

Your brand's reputation is no longer what Google search results say it is; it is what the AI synthesizes. Defend your entities, own your narrative on Seed Sites, and never leave an AI to guess what your brand stands for.